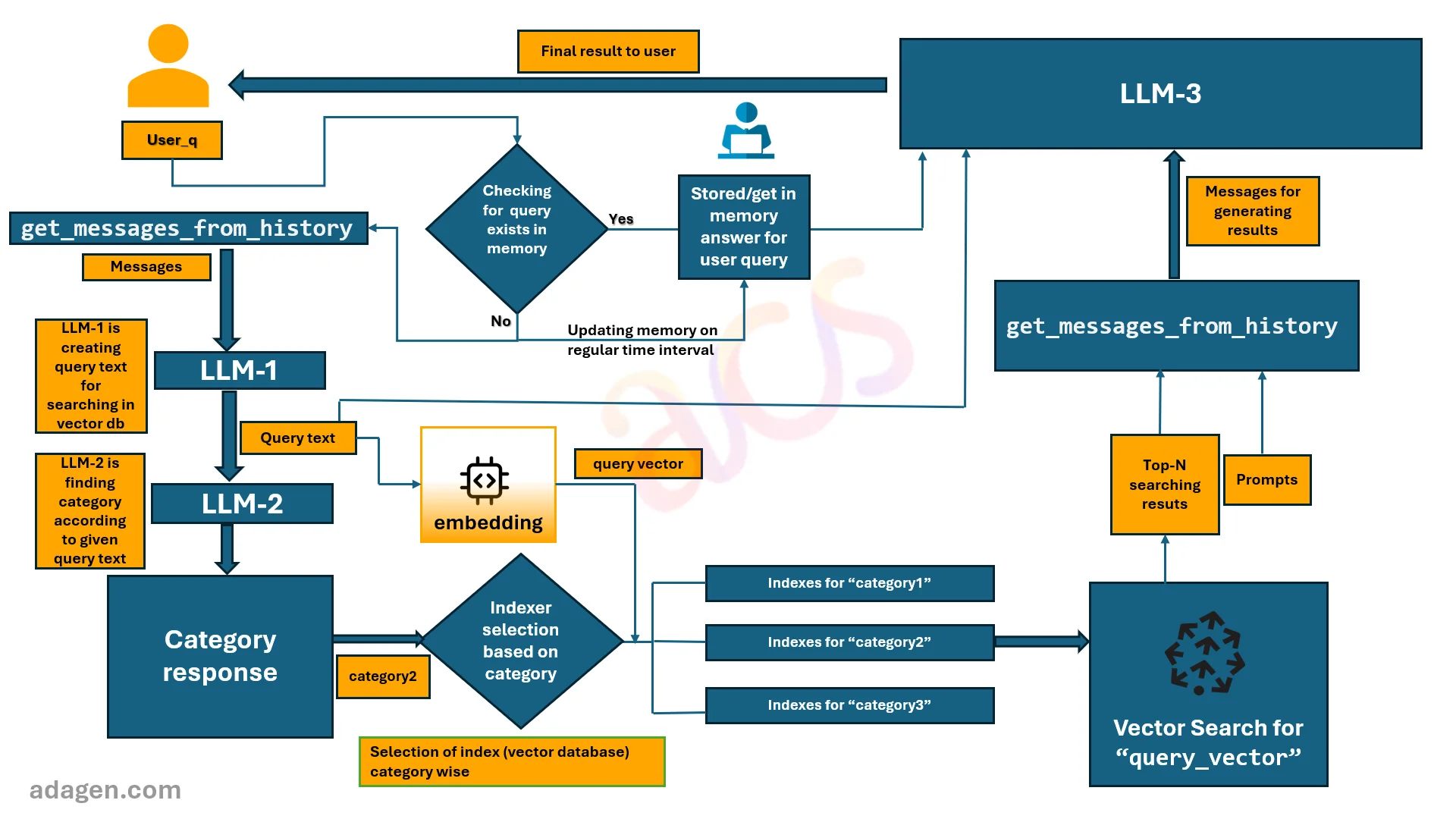

Multi-index RAG system with In-Memory Reinforcement Learning from Human Feedback (RLHF)

In the world of artificial intelligence, LLM (large language model) GenAI Apps have become an integral part of our daily interactions. They assist us in various tasks, from answering queries to providing recommendations. However, what happens when a user does not provide a proper query, making it challenging for the LLM GenAI App to generate appropriate results?

The Challenge of Improper Queries

LLM GenAI Apps are designed to understand and respond to user queries effectively. However, they sometimes struggle when the user input is incomplete, ambiguous, or simply unconventional. This can lead to unsatisfactory user experiences as the LLM GenAI App may generate irrelevant or incorrect responses.

The Solution: A Predefined In-Memory Data Structure

To mitigate this problem, we can create a predefined in-memory data structure. It contains common improper queries as keys and the appropriate responses as values. When a user input matches a key, the LLM GenAI App returns the corresponding value as the response.
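
As a minimal sketch of this idea, the structure can be a plain Python dictionary. The entries, the `normalize` helper, and the function name `lookup_canned_response` are illustrative assumptions, not part of any specific platform; real entries would come from observed user interactions.

```python
from typing import Optional

# Hypothetical canned responses for improper queries (illustrative only).
IMPROPER_QUERY_RESPONSES = {
    "help": "Sure. What would you like help with?",
    "???": "I did not catch that. Could you rephrase your question?",
    "": "Please type a question so I can help.",
}


def normalize(query: str) -> str:
    """Lowercase and collapse whitespace so trivially different inputs match."""
    return " ".join(query.lower().split())


def lookup_canned_response(query: str) -> Optional[str]:
    """Return the predefined response for a known improper query, else None."""
    return IMPROPER_QUERY_RESPONSES.get(normalize(query))
```

Exact-match lookup after light normalization is the simplest choice here; fuzzier matching would catch more variants but is beyond what the approach above describes.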

In addition, a proper query follows the normal path, and its result is also saved to the in-memory data structure at regular intervals, so that the same query can be answered faster the next time it is asked.
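
One way to picture the periodic saving of proper queries is a small cache that buffers new query-response pairs and merges them into the lookup table once a time interval has elapsed. This is a sketch under stated assumptions: the class name `QueryCache`, the `flush_interval` parameter, and the choice to flush lazily on the next `record` call (rather than from a background job) are all hypothetical.

```python
import time
from typing import Callable, Dict, Optional


class QueryCache:
    """Buffers answered queries and publishes them to the cache at intervals."""

    def __init__(self, flush_interval: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._cache: Dict[str, str] = {}    # entries visible to lookups
        self._pending: Dict[str, str] = {}  # entries waiting for the next flush
        self._flush_interval = flush_interval
        self._clock = clock
        self._last_flush = clock()

    def get(self, query: str) -> Optional[str]:
        """Return a cached response, or None if the query is not cached yet."""
        return self._cache.get(query)

    def record(self, query: str, response: str) -> None:
        """Buffer a freshly generated response, then flush if the interval passed."""
        self._pending[query] = response
        now = self._clock()
        if now - self._last_flush >= self._flush_interval:
            self._cache.update(self._pending)
            self._pending.clear()
            self._last_flush = now
```

The `clock` argument exists only so the interval logic can be exercised without waiting; in normal use the default `time.monotonic` is enough.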

This approach has several benefits:

1. Improved User Experience: By providing accurate responses to improper queries, we enhance the user's interaction with the LLM GenAI App.

2. Efficiency: The LLM GenAI App can instantly look up the response from the memory data structure, making the process faster.

3. Flexibility: The memory data structure can be easily updated or expanded as we identify new improper queries.

Implementing the In-Memory Data Structure

The implementation of the memory data structure will depend on the specific LLM GenAI App platform and programming language you are using. However, the general process involves:

1. Identifying common improper queries from user interactions.

2. Crafting appropriate responses for these queries.

3. Storing these query-response pairs in a memory data structure.

4. Updating the LLM GenAI App's response mechanism to check the memory data structure before generating a response.
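
The four steps above can be sketched roughly as follows. The function `fake_generate` is a hypothetical stand-in for whatever retrieval and generation pipeline the app actually uses, and `make_responder` and the sample entry in `memory` are assumptions made for illustration.

```python
from typing import Callable, Dict


def make_responder(memory: Dict[str, str],
                   generate: Callable[[str], str]) -> Callable[[str], str]:
    """Build a responder that checks the in-memory table before generating."""

    def respond(query: str) -> str:
        # Normalize so that "Hi", " hi " and "HI" hit the same key.
        key = " ".join(query.lower().split())
        if key in memory:
            return memory[key]      # step 4: serve the stored response
        return generate(query)      # otherwise fall back to the normal path

    return respond


def fake_generate(query: str) -> str:
    """Placeholder for the real LLM / RAG call (hypothetical)."""
    return f"[generated answer for: {query}]"


# Steps 1-3: identified improper queries and their crafted responses.
memory = {"hi": "Hello! What would you like to know?"}
respond = make_responder(memory, fake_generate)
```

Calling `respond(" Hi ")` is served from `memory`, while a query that is not in the table is passed to `fake_generate` unchanged.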
